The end result: an aesthetically interesting image I couldn’t imagine creating from scratch

Since I was 15 years old, I have been painting graffiti under bridges and in abandoned buildings. I grew up in San Francisco when street art was booming, and inspired by the colors and aesthetic, I looked for ways to create art and taught myself to paint. As I got older, I discovered the graffiti communities on Flickr, and began making an effort to meet artists where I lived and share photos of my work online. As Tumblr grew in popularity, the community moved. Then Instagram emerged, and the community moved again.

In recent years, I haven’t had the same leeway to paint in public. There was a greater cultural acceptance of street art when I lived abroad. Painting on walls was seen as beautification in areas where there was much demolition. When I moved back to the US, I started painting on larger canvases, and eventually moved toward spray cans and paint brushes.

Inspired by a project by Kawandeep Virdee, I photoshopped the paintings with motion blur filters, and modified the lighting effects. The result was a creative jumping-off point, enabling me to create a digitally inspired physical painting.

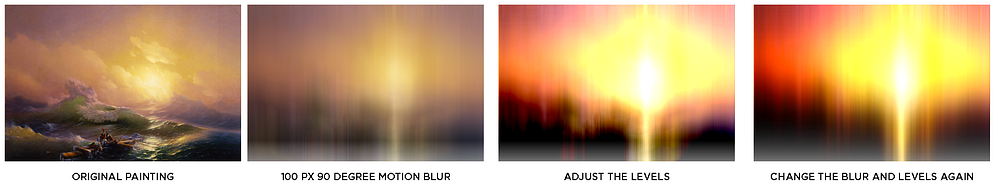

Last year, I started experimenting even more with digitally manipulated images and their role in inspiring physical paintings. I began creating images by taking classic paintings from the 18th and 19th centuries and running various Photoshop filters over them. I found the color and contrast of these old paintings to be unmatched and beautiful.

I used the digital pieces I created as the inspiration for new paintings: I remixed the classical paintings on a computer and then physically painted the remixed image.

I continued my interest in graffiti, again using the digital space as a canvas, and spent a few months building out various software tools that I thought would be useful for graffiti artists. After creating such a large library of literally millions of paintings, I realized I wanted to do something more than just browse the images, so I started exploring different techniques around machine learning.

I started teaching myself how neural networks can be applied to something called “style transfer,” which refers to the process of analyzing two images, extracting the qualities that make one of them recognizable, and applying those qualities to the other. This meant that I could replicate one image’s color, shapes, contrast, and various other features onto another. The best-known example of style transfer applies the look of Van Gogh’s “Starry Night” to any photograph.
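To make "qualities" a little more concrete, here is a minimal sketch of how style is often measured. It assumes the common Gram-matrix formulation of neural style transfer and is only an illustration, not necessarily the tool used for these paintings. In a real pipeline the feature maps come from a pretrained convolutional network; here they are random NumPy arrays standing in for those features, and the function names and weights are hypothetical.

```python
import numpy as np


def gram_matrix(features):
    """Summarize style as channel-to-channel correlations.

    features: array of shape (channels, height, width), standing in for
    the feature maps a pretrained network would produce (an assumption).
    """
    c, h, w = features.shape
    flat = features.reshape(c, h * w)
    # Summing over every spatial position discards layout and keeps
    # only which features tend to fire together (texture, color mix).
    return flat @ flat.T / (h * w)


def style_loss(generated, style):
    """Mean squared difference between the two Gram matrices."""
    return float(np.mean((gram_matrix(generated) - gram_matrix(style)) ** 2))


def content_loss(generated, content):
    """Mean squared difference between the feature maps themselves."""
    return float(np.mean((generated - content) ** 2))


def total_loss(generated, content, style,
               content_weight=1.0, style_weight=1e3):
    """Weighted sum that an optimizer would minimize (weights are illustrative)."""
    return (content_weight * content_loss(generated, content)
            + style_weight * style_loss(generated, style))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    photo_features = rng.normal(size=(4, 8, 8))     # stand-in for a nature photo
    graffiti_features = rng.normal(size=(4, 8, 8))  # stand-in for a street art image
    print(total_loss(photo_features, photo_features, graffiti_features))
```

Because the Gram matrix sums over all positions, rearranging where features appear leaves the style measure unchanged while the content measure changes. That is roughly why a photograph can keep its composition while taking on the texture and palette of a mural.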

Similar to my previous project of painting the digital sunset images, I processed pictures using the artistic style transfer algorithm and then painted them. Drawing on the large collection of graffiti images I’d already gathered, I took images of nature and processed them in the style of street art pieces I found interesting. The end result was an aesthetically interesting image I couldn’t imagine creating from scratch.

It’s been a few months since I’ve done anything with this technique of mixing images and painting them. I hope the process depicted above can be a source of inspiration for other programmer-painters who enjoy mixing both practices.

Below are a few examples of what an artist can create by combining street art images with photographs.